Deploy a data plane with Helm in Enterprise Flex

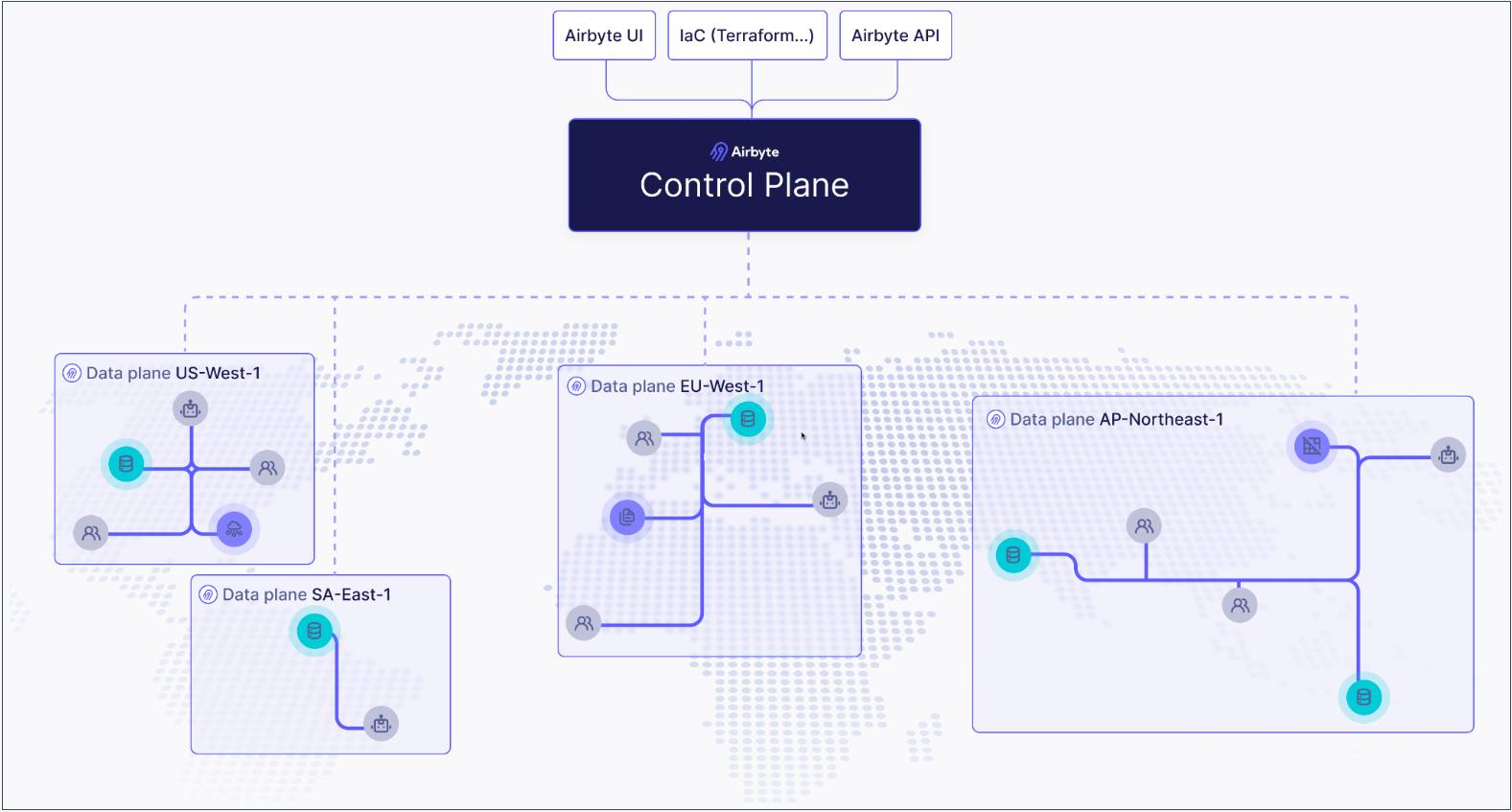

Airbyte Enterprise Flex customers can use Airbyte's public API to define regions and create independent data planes that operate in those regions. This ensures you're satisfying your data residency and governance requirements with a single Airbyte Cloud deployment, and it can help you reduce data egress costs with cloud providers.

How it works

If you're not familiar with Kubernetes, think of the control plane as the brain and data planes as the muscles doing work the brain tells them to do.

- The control plane is responsible for Airbyte's user interface, APIs, Terraform provider, and orchestrating work. Airbyte manages this for you in the cloud, reducing the time and resources it takes to start moving your data.

- The data plane initiates jobs, syncs data, completes jobs, and reports its status back to the control plane. We offer cloud regions equipped to do this for you, but you also have the flexibility to deploy your own to keep sensitive data protected or meet local data residency requirements.

This separation of duties is what allows a single Airbyte deployment to ensure your data remains segregated and compliant.

By default, Airbyte has a single data plane that any workspace in the organization can access, and it's automatically tied to the default workspace when Airbyte first starts. To configure additional data planes and regions, complete these steps.

If you have not already, ensure you have the required infrastructure to run your data plane.

- Create a region.

- Create a data plane in that region.

- Configure Kubernetes secrets.

- Create your values.yaml file.

- Deploy your data plane.

- Associate your region to an Airbyte workspace. You can tie each workspace to exactly one region.

Prerequisites

Before you begin, make sure you've completed the following:

- You must be an Organization Administrator to manage regions and data planes.

- You need a Kubernetes cluster on which your data plane can run. For example, if you want your data plane to run on eu-west-1, create an EKS cluster on eu-west-1.

- You need to use a secrets manager for the connections on your data plane. Modifying the configuration of connector secret storage will cause all existing connectors to fail, so we recommend only using newly created workspaces on the data plane.

- If you haven't already, get access to Airbyte's API by creating an application and generating an access token. For help, see Configuring API access.

Infrastructure prerequisites

For a production-ready deployment of self-managed data planes, you need the following infrastructure components. Airbyte recommends deploying to Amazon EKS, Google Kubernetes Engine, or Azure Kubernetes Service.

Amazon

| Component | Recommendation |
|---|---|
| Kubernetes Cluster | Amazon EKS cluster running on EC2 instances in 2 or more availability zones. |
| External Secrets Manager | AWS Secrets Manager for storing connector secrets, accessed through a dedicated Airbyte role whose policy grants all required permissions. |
| Object Storage (Optional) | Amazon S3 bucket with a directory for log storage. |

Azure

| Component | Recommendation |
|---|---|
| Kubernetes Cluster | Azure Kubernetes Service cluster running in 2 or more availability zones. |
| External Secrets Manager | Azure Key Vault for storing connector secrets, accessed through a dedicated Airbyte role whose policy grants all required permissions. |
| Object Storage (Optional) | Azure Blob Storage with a directory for log storage. |

A few notes on Kubernetes cluster provisioning for self-managed data planes and Airbyte Enterprise Flex:

- We support Amazon Elastic Kubernetes Service (EKS) on EC2, Google Kubernetes Engine (GKE) on Google Compute Engine (GCE), or Azure Kubernetes Service (AKS) on Azure.

- While we support GKE Autopilot, we do not support Amazon EKS on Fargate.

We require you to install and configure the following Kubernetes tooling:

- Install helm by following these instructions.

- Install kubectl by following these instructions.

- Configure kubectl to connect to your cluster by using kubectl config use-context my-cluster-name:

Configure kubectl to connect to your cluster

Amazon EKS

- Configure your AWS CLI to connect to your project.

- Install eksctl.

- Run eksctl utils write-kubeconfig --cluster=$CLUSTER_NAME to make the context available to kubectl.

- Use kubectl config get-contexts to show the available contexts.

- Run kubectl config use-context $EKS_CONTEXT to access the cluster with kubectl.

GKE

- Configure gcloud with gcloud auth login.

- On the Google Cloud Console, the cluster page has a "Connect" button that shows a command to run locally: gcloud container clusters get-credentials $CLUSTER_NAME --zone $ZONE_NAME --project $PROJECT_NAME.

- Use kubectl config get-contexts to show the available contexts.

- Run kubectl config use-context $GKE_CONTEXT to access the cluster with kubectl.

We also require you to create a Kubernetes namespace for your Airbyte deployment:

kubectl create namespace airbyte

1. Create a region

The first step is to create a region. Regions are objects that contain data planes and that you associate with workspaces.

Request

Send a POST request to /v1/regions/.

curl --request POST \
  --url https://api.airbyte.com/v1/regions \
  --header "Authorization: Bearer $TOKEN" \
  --header "Content-Type: application/json" \
  --data '{
    "name": "aws-us-east-1",
    "organizationId": "00000000-0000-0000-0000-000000000000"
  }'

Include the following parameters in your request.

| Body parameter | Required? | Description |
|---|---|---|
| name | Required | The name of your region in Airbyte. As a best practice, include the cloud provider (if applicable) and the actual region in the name. |
| organizationId | Required | Your Airbyte organization ID. To find this in the UI, navigate to Organization settings > General. |
| enabled | Optional | Defaults to true. Set this to false if you don't want this region enabled. |

For additional request examples, see the API reference.

Response

Make note of your regionId. You need it to create a data plane.

{
  "regionId": "uuid-string",
  "name": "region-name",
  "organizationId": "org-uuid-string",
  "enabled": true,
  "createdAt": "timestamp-string",
  "updatedAt": "timestamp-string"
}
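If you would rather script this step than run curl by hand, the request and the response handling might look like the following minimal Python sketch. It uses only the standard library, and it relies on the endpoint and body fields shown above. The function names build_region_request and parse_region_id are illustrative and are not part of any Airbyte SDK.

```python
import json
import urllib.request

API_BASE = "https://api.airbyte.com/v1"  # base URL used in the curl example above


def build_region_request(token, name, organization_id, enabled=True):
    """Build (but do not send) the POST /v1/regions request."""
    body = {"name": name, "organizationId": organization_id, "enabled": enabled}
    return urllib.request.Request(
        url=f"{API_BASE}/regions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def parse_region_id(response_body):
    """Return the regionId field from a JSON response body (str or bytes)."""
    return json.loads(response_body)["regionId"]


# Usage (performs a real API call, so it is left commented out):
# request = build_region_request(token, "aws-us-east-1", organization_id)
# with urllib.request.urlopen(request) as response:
#     region_id = parse_region_id(response.read())
```

Keeping the request construction separate from the network call makes it easy to inspect the payload before anything is sent.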

2. Create a data plane

Once you have a region, you create a data plane within it.

Request

Send a POST request to /v1/dataplanes.

curl -X POST https://api.airbyte.com/v1/dataplanes \
  --header "Authorization: Bearer $TOKEN" \
  --header "Content-Type: application/json" \
  -d '{
    "name": "aws-us-east-1",
    "regionId": "00000000-0000-0000-0000-000000000000"
  }'

Include the following parameters in your request.

| Body parameter | Required? | Description |
|---|---|---|
| name | Required | The name of your data plane. For simplicity, you might want to name it based on the region in which you created it. |
| regionId | Optional | The region this data plane belongs to. |

For additional request examples, see the API reference.

Response

Make note of your dataplaneId, clientId and clientSecret. You need these values later to deploy your data plane on Kubernetes.


{
  "dataplaneId": "uuid-string",
  "clientId": "client-id-string",
  "clientSecret": "client-secret-string"
}

3. Configure Kubernetes Secrets

Your data plane relies on Kubernetes secrets to identify itself with the control plane.

In step 4, you create a values.yaml file that references this Kubernetes secret store and these secret keys. Configure all required secrets before deploying your data plane.

You can create your Kubernetes secrets by applying the example manifests below to your cluster or by using kubectl directly. Ensure that the secrets manager configuration on your data plane matches the configuration on the control plane. At this time, only access key authentication is supported.

While you can name the secret whatever you prefer, you must set that name in your values.yaml file. For this reason, it's easiest to keep the name airbyte-config-secrets unless you have a reason to change it.

airbyte-config-secrets

AWS

apiVersion: v1
kind: Secret
metadata:
  name: airbyte-config-secrets
type: Opaque
stringData:
  # Insert the data plane credentials received in step 2
  DATA_PLANE_CLIENT_ID: your-data-plane-client-id
  DATA_PLANE_CLIENT_SECRET: your-data-plane-client-secret

  # Only set these values if they are also set on your control plane
  AWS_SECRET_MANAGER_ACCESS_KEY_ID: your-aws-secret-manager-access-key
  AWS_SECRET_MANAGER_SECRET_ACCESS_KEY: your-aws-secret-manager-secret-key
  S3_ACCESS_KEY_ID: your-s3-access-key
  S3_SECRET_ACCESS_KEY: your-s3-secret-key

Apply your secrets manifest in your command-line tool with kubectl: kubectl apply -f <file>.yaml -n <namespace>.

You can also use kubectl to create the secret directly from the command-line tool:

kubectl create secret generic airbyte-config-secrets \
  --from-literal=license-key='' \
  --from-literal=DATA_PLANE_CLIENT_ID='' \
  --from-literal=DATA_PLANE_CLIENT_SECRET='' \
  --from-literal=S3_ACCESS_KEY_ID='' \
  --from-literal=S3_SECRET_ACCESS_KEY='' \
  --from-literal=AWS_SECRET_MANAGER_ACCESS_KEY_ID='' \
  --from-literal=AWS_SECRET_MANAGER_SECRET_ACCESS_KEY='' \
  --namespace airbyte
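As an alternative to copying credentials by hand, the step 2 response can be mapped onto a secret manifest programmatically. The following Python sketch is one way to do that under a few assumptions: the response has the clientId and clientSecret fields shown in step 2, the secret keys are the DATA_PLANE_CLIENT_ID and DATA_PLANE_CLIENT_SECRET names used in this guide, and the namespace is the airbyte namespace created earlier. The function names are hypothetical, and the sketch covers only the data plane credentials, so you would still add any secrets manager or storage keys yourself.

```python
import json


def build_secret_manifest(dataplane_response, name="airbyte-config-secrets", namespace="airbyte"):
    """Map the step 2 response onto the secret keys used in this guide."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name, "namespace": namespace},
        "type": "Opaque",
        "stringData": {
            "DATA_PLANE_CLIENT_ID": dataplane_response["clientId"],
            "DATA_PLANE_CLIENT_SECRET": dataplane_response["clientSecret"],
        },
    }


def render_manifest(manifest):
    """Serialize the manifest as JSON, a format kubectl apply -f accepts alongside YAML."""
    return json.dumps(manifest, indent=2)
```

Writing the output of render_manifest to a file and passing that file to kubectl apply -f creates the same kind of secret as the manifest above. Treat the generated file as sensitive, because it contains the client secret in plain text.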

Azure

airbyteUrl: https://cloud.airbyte.com # Base URL for the control plane so Airbyte knows where to authenticate

dataPlane:
  # Used to render the data plane creds secret into the Helm chart.
  secretName: airbyte-config-secrets
  id: "preview-data-plane"

  # Describe secret name and key where each of the client ID and secret are stored
  clientIdSecretName: airbyte-config-secrets
  clientIdSecretKey: DATA_PLANE_CLIENT_ID
  clientSecretSecretName: airbyte-config-secrets
  clientSecretSecretKey: DATA_PLANE_CLIENT_SECRET

# Secret manager secrets/config
# Must be set to the same secrets manager as the control plane
secretsManager:
  secretName: airbyte-config-secrets
  type: AZURE_KEY_VAULT
  azureKeyVault:
    vaultUrl: ## https://my-vault.vault.azure.net/
    tenantId: ## 3fc863e9-4740-4871-bdd4-456903a04d4e
    clientId: ""
    clientIdSecretKey: ""
    clientSecret: ""
    clientSecretSecretKey: ""

4. Create your deployment values

Add the following overrides to a new values.yaml file.

airbyteUrl: https://cloud.airbyte.com # Base URL for the control plane so Airbyte knows where to authenticate

dataPlane:
  # Used to render the data plane creds secret into the Helm chart.
  secretName: airbyte-config-secrets
  id: "preview-data-plane"

  # Describe secret name and key where each of the client ID and secret are stored
  clientIdSecretName: airbyte-config-secrets
  clientIdSecretKey: DATA_PLANE_CLIENT_ID
  clientSecretSecretName: airbyte-config-secrets
  clientSecretSecretKey: DATA_PLANE_CLIENT_SECRET

# S3 bucket secrets/config
# Only set this section if you are using a self-managed bucket, otherwise it can be omitted.
storage:
  secretName: airbyte-config-secrets
  type: "s3"
  bucket:
    log: my-bucket-name
    state: my-bucket-name
    workloadOutput: my-bucket-name
  s3:
    region: "us-west-2"
    authenticationType: credentials
    accessKeyIdSecretKey: S3_ACCESS_KEY_ID
    secretAccessKeySecretKey: S3_SECRET_ACCESS_KEY

# Secret manager secrets/config
# Must be set to the same secrets manager as the control plane
secretsManager:
  secretName: airbyte-config-secrets
  type: AWS_SECRET_MANAGER
  awsSecretManager:
    region: us-west-2
    authenticationType: credentials
    accessKeyIdSecretKey: AWS_SECRET_MANAGER_ACCESS_KEY_ID
    secretAccessKeySecretKey: AWS_SECRET_MANAGER_SECRET_ACCESS_KEY
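Because values.yaml refers to secret keys by name, a mismatch between those names and the keys in your Kubernetes secret only surfaces after deployment. The Python sketch below shows one way to check for that ahead of time. It assumes the values file has already been loaded into a dictionary with a YAML parser of your choice, that every setting whose name ends in SecretKey holds a secret key name (as in the example above), and that all of those keys live in a single secret. The function names are hypothetical.

```python
def referenced_secret_keys(values):
    """Collect every non-empty string stored under a key ending in 'SecretKey', at any depth."""
    found = set()
    if isinstance(values, dict):
        for key, value in values.items():
            if key.endswith("SecretKey") and isinstance(value, str) and value:
                found.add(value)
            else:
                # Recurse into nested sections such as storage.s3 or secretsManager.awsSecretManager.
                found |= referenced_secret_keys(value)
    elif isinstance(values, list):
        for item in values:
            found |= referenced_secret_keys(item)
    return found


def missing_secret_keys(values, secret_keys):
    """Return, sorted, the referenced key names that the secret does not define."""
    return sorted(referenced_secret_keys(values) - set(secret_keys))
```

An empty result from missing_secret_keys means every key name referenced in the values dictionary is present in the list of secret keys you passed in. It does not verify that the stored credentials themselves are valid.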

5. Deploy your data plane

In your command-line tool, deploy the data plane using helm upgrade. The examples here may not reflect your actual Airbyte version and namespace conventions, so make sure you use the settings that are appropriate for your environment.

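To deploy into the namespace of your current kubectl context:

helm upgrade --install airbyte-enterprise airbyte/airbyte-data-plane --version 1.8.1 --values values.yaml

To deploy into a dedicated namespace, creating it if it doesn't exist yet:

helm upgrade --install airbyte-enterprise airbyte/airbyte-data-plane --version 1.8.1 -n airbyte-dataplane --create-namespace --values values.yaml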

6. Associate a region to a workspace

Once you have a region and a data plane, you need to associate that region with your workspace. You can associate a workspace with a region when you create that workspace or later, after it exists.

You can only associate each workspace with one region.

UI

Follow these steps to associate your region to your current workspace using Airbyte's user interface.

- In the navigation panel, click Workspace settings > General.

- Under Region, select your region.

- Click Save changes.

Now, run any sync. You will see the workloads spin up in the new data plane you've configured.

API

When creating a new workspace:

Request

Send a POST request to /v1/workspaces/.

curl -X POST "https://api.airbyte.com/v1/workspaces" \
  --header "Authorization: Bearer $TOKEN" \
  --header "Content-Type: application/json" \
  -d '{
    "name": "My New Workspace",
    "dataResidency": "auto"
  }'

Include the following parameters in your request.

| Body parameter | Description |
|---|---|
| name | The name of your workspace in Airbyte. |
| dataResidency | A string with a region identifier you received in step 1. |

For additional request examples, see the API reference.

Response

{
  "workspaceId": "uuid-string",
  "name": "workspace-name",
  "dataResidency": "auto",
  "notifications": {
    "failure": {},
    "success": {}
  }
}

When updating a workspace:

Request

Send a PATCH request to /v1/workspaces/{workspaceId}.

curl -X PATCH "https://api.airbyte.com/v1/workspaces/{workspaceId}" \
  --header "Authorization: Bearer $TOKEN" \
  --header "Content-Type: application/json" \
  -d '{
    "name": "Updated Workspace Name",
    "dataResidency": "us-west"
  }'

Include the following parameters in your request.

| Body parameter | Description |
|---|---|
| name | The name of your workspace in Airbyte. |
| dataResidency | A string with a region identifier you received in step 1. |

For additional request examples, see the API reference.

Response

{
  "workspaceId": "uuid-string",
  "name": "updated-workspace-name",
  "dataResidency": "region-identifier",
  "notifications": {
    "failure": {},
    "success": {}
  }
}

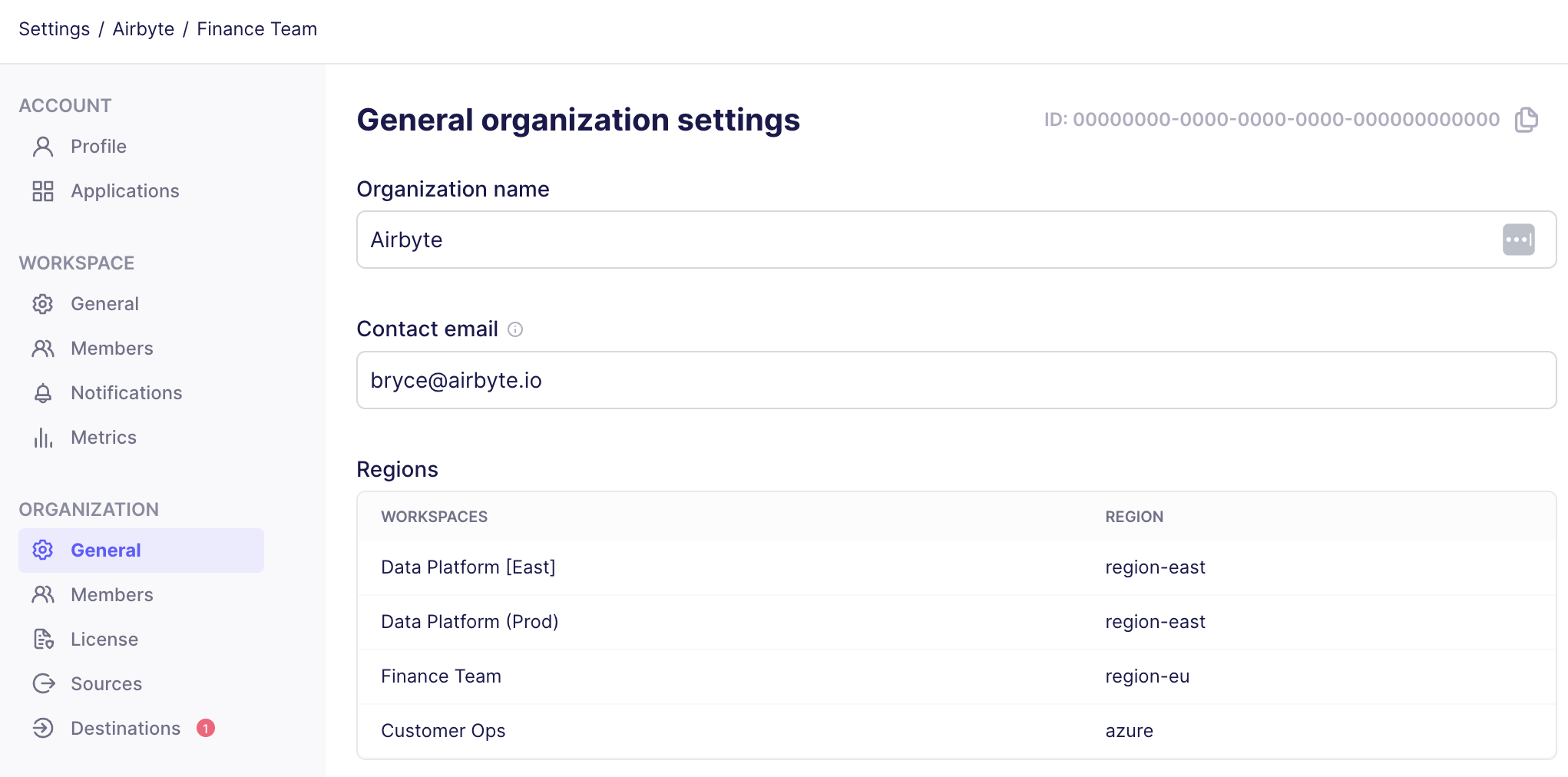

Check which region your workspaces use

UI

You can see a list of your workspaces and the region associated to each from Airbyte's organization settings.

- In Airbyte's user interface, click Workspace settings > General. Airbyte displays your workspaces and each workspace region under Regions.

API

Request:

curl -X GET "https://api.airbyte.com/v1/workspaces/{workspaceId}" \
  -H "Authorization: Bearer $TOKEN" \
  -H "Content-Type: application/json"

Response:

{
  "workspaceId": "18dccc91-0ab1-4f72-9ed7-0b8fc27c5826",
  "name": "Acme Company",
  "dataResidency": "auto"
}
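If you collect this response for several workspaces, a small helper can summarize which workspaces use which region. The Python sketch below assumes you already have a list of workspace objects shaped like the response above, each with a name and a dataResidency field. The function name group_workspaces_by_region is illustrative only.

```python
from collections import defaultdict


def group_workspaces_by_region(workspaces):
    """Map each dataResidency value to the sorted names of the workspaces that use it."""
    grouped = defaultdict(list)
    for workspace in workspaces:
        grouped[workspace["dataResidency"]].append(workspace["name"])
    return {region: sorted(names) for region, names in grouped.items()}
```

Because each workspace is tied to exactly one region, every workspace name appears under a single key in the result.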